The combined expertise of Stability AI, Runway, and CompVis paves the way for innovative text-to-image models.

Stable Diffusion, an advanced image generator that creates stunning images from text descriptions, was launched in 2022. It was created by Stability AI in partnership with researchers and other organizations, with the aim of changing the way people make images.

Its versatile applications and user-friendly interface make it accessible to anyone with a computer equipped with a decent GPU. Stability AI has also developed a new model, Stable Diffusion XL (SDXL), that excels at creating lifelike images, and the company has promised an open-source release of SDXL in the near future.

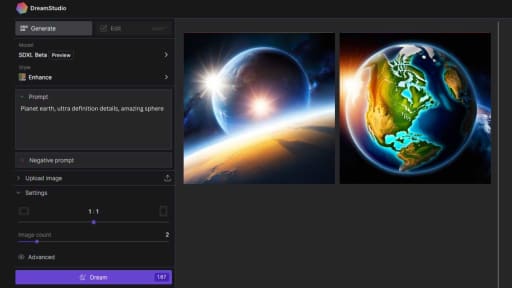

Stable Diffusion can also generate variations of an image and fill in missing parts. It is available in apps such as DreamStudio and NightCafe Studio, and the company wants to share the model with as many people as possible.

Stability AI hopes that many people will use this AI model to create amazing images. To learn more, visit the company's website at https://stability.ai

Development of Stable Diffusion

Development of Stable Diffusion was spearheaded by Patrick Esser of Runway and Robin Rombach of CompVis, while Stability AI funded the project and collaborated with other partners. One of the first famous prompts, "a professional photograph of an astronaut riding a horse," was generated with Stable Diffusion 2.

Stability AI also worked with partners such as EleutherAI and LAION to ensure the platform's success. In the future, the partners aim to build more apps and services on Stable Diffusion, further expanding its capabilities and reach.

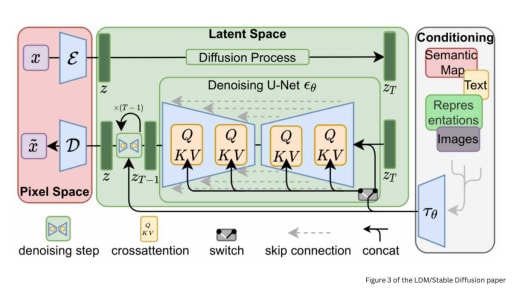

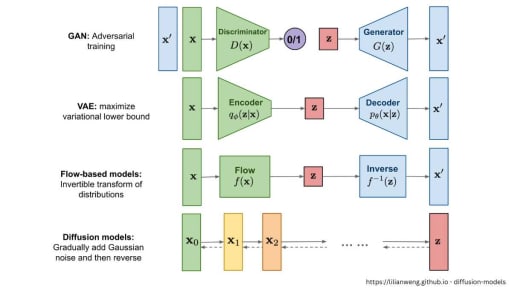

Technology

Stable Diffusion uses cutting-edge technology to generate images that closely match user input. It combines a text encoder, which converts the user's prompt into a numerical representation, with a diffusion model that gradually turns random noise into an image guided by that representation. Like its competitor Midjourney, it turns text prompts into images. With each new model release, the system gets better at producing images that match what the user wants.

Training Dataset

The image generator model was trained on a vast amount of data collected from the internet. Researchers compiled images and their corresponding text descriptions to teach the AI what various objects and scenes look like. This comprehensive training data enables the model to interpret users' text prompts effectively.

Capabilities

Stable Diffusion has many fun features, such as creating new images from text, changing parts of existing images, and repairing damaged images. The new version, Stable Diffusion 2.1, adds more tools and features to help users be creative. The version 2 models were trained with a new text encoder, OpenCLIP.

End-user Fine-tuning

Users can fine-tune Stability AI's Stable Diffusion to optimize its performance for their unique needs. For instance, they can train it to create images in a specific style, such as that of a favorite artist or a trending visual style. By experimenting with various settings and adjustments, users can generate one-of-a-kind images that capture their imagination.

Usage and Controversy

There has been a debate about who owns the images made with Stability AI's image generator model. Some people worry that because the model learned from pictures found online, it may have been trained on copyrighted images without permission. Stability AI, for its part, says people keep ownership of the pictures they make with Stable Diffusion.

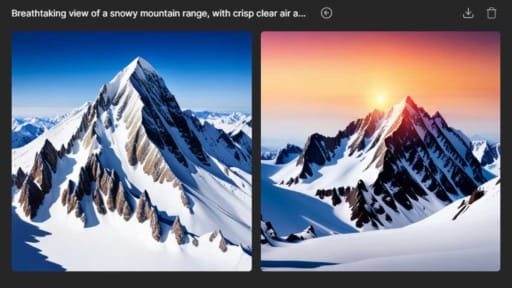

How to Build a Good Prompt

The first step to generating AI artwork with Stable Diffusion is to build a good prompt. A prompt is a text description that the AI model uses to generate an image. A good prompt is detailed and specific and includes powerful keywords, such as artist names, art styles, and art mediums. The more specific and detailed your prompt is, the closer the resulting image will be to what you have in mind.

A good image prompt should cover most, if not all, of these areas:

- Subject (required)

- Medium

- Style

- Artist

- Website

- Resolution

- Additional details

- Color
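As an illustration, the checklist above can be turned into a small prompt builder. This is a minimal Python sketch, not part of any Stable Diffusion tool: the `PromptParts` class and its field names are hypothetical, and joining the parts with commas is a common community convention rather than a rule the model enforces.

```python
from dataclasses import dataclass, field


@dataclass
class PromptParts:
    """Hypothetical container for the prompt areas listed above."""
    subject: str                                       # required: what the image shows
    medium: str = ""                                   # e.g. "oil painting"
    style: str = ""                                    # e.g. "impressionist"
    artist: str = ""                                   # e.g. "by Claude Monet"
    website: str = ""                                  # e.g. "trending on ArtStation"
    resolution: str = ""                               # e.g. "highly detailed"
    details: list[str] = field(default_factory=list)   # any extra keywords
    color: str = ""                                    # e.g. "warm golden tones"

    def build(self) -> str:
        """Join the non-empty parts into one comma-separated prompt."""
        if not self.subject.strip():
            raise ValueError("subject is required")
        parts = [self.subject, self.medium, self.style, self.artist,
                 self.website, self.resolution, *self.details, self.color]
        # Skip empty or whitespace-only fields so no dangling commas appear.
        return ", ".join(p.strip() for p in parts if p.strip())


if __name__ == "__main__":
    prompt = PromptParts(
        subject="a lighthouse on a rocky coast at sunset",
        medium="oil painting",
        style="impressionist",
        artist="by Claude Monet",
        resolution="highly detailed",
        color="warm golden tones",
    ).build()
    print(prompt)
```

Leaving a field empty simply omits it, so only the subject has to be filled in; the other areas can be added one at a time while you compare results.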

Inpainting

Inpainting is a technique that involves filling in missing or damaged parts of an image. It is an important way to fix defects in generated AI images, such as small artifacts or missing features, and in this part of the guide you will learn how to use it. Inpainting is easy to use and can be done in an SD GUI. For inpainting with Stable Diffusion, you can also download the SD 2.0-inpainting or Stable Diffusion 2.1 checkpoint and run it in Python.
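The core idea behind inpainting is a mask that marks which pixels to regenerate. The Python sketch below shows only that masking step, on tiny grayscale images stored as nested lists: pixels where the mask is 1 come from the newly generated image, and all other pixels are kept from the original. It does not run a diffusion model; the `generated` image stands in for whatever the inpainting model returns, and the function name `composite_inpaint` is hypothetical. The 1 = repaint convention mirrors tools such as the diffusers inpainting pipeline, where white mask pixels are repainted and black ones are preserved.

```python
Pixels = list[list[int]]  # grayscale pixel rows, values 0-255


def composite_inpaint(original: Pixels, generated: Pixels, mask: Pixels) -> Pixels:
    """Take generated pixels where mask == 1 and original pixels elsewhere."""
    if not (len(original) == len(generated) == len(mask)):
        raise ValueError("images and mask must have the same height")
    result: Pixels = []
    for orig_row, gen_row, mask_row in zip(original, generated, mask):
        if not (len(orig_row) == len(gen_row) == len(mask_row)):
            raise ValueError("images and mask must have the same width")
        # Masked pixels are replaced; unmasked pixels stay untouched.
        result.append([g if m else o for o, g, m in zip(orig_row, gen_row, mask_row)])
    return result


if __name__ == "__main__":
    original = [[10, 10, 10], [10, 0, 10], [10, 10, 10]]    # 0 marks the defect
    generated = [[99, 99, 99], [99, 12, 99], [99, 99, 99]]  # stand-in model output
    mask = [[0, 0, 0], [0, 1, 0], [0, 0, 0]]                # repaint the centre only
    print(composite_inpaint(original, generated, mask))
```

Keeping the unmasked pixels from the original is what lets inpainting fix a small defect without disturbing the rest of the picture.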

Models

Stable Diffusion has several base models available for generating images. Each model has its own strengths and weaknesses, so it is important to choose the right one for your needs. Custom models can also be trained from the base models, allowing you to generate images in a specific style or of a specific object.

How to Start Generating Images

There are two main ways to start generating images with Stable Diffusion: using an online generator or using an advanced GUI. Beginners are advised to start with a free online generator and to move to a more advanced GUI once they outgrow its functionality.

Integration

The API key can be found in the user's DreamStudio account. The API offers its operations in several versions: stable, production-ready versions; stable versions being prepared for production release; and under-development versions whose interfaces are subject to change. The v1alpha and v1beta endpoints from the developer preview will be disabled on May 1, 2023, and users are advised to migrate to the v1 endpoints as soon as possible.
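When moving off the developer-preview endpoints, the version segment of each request path has to change. The sketch below is a hypothetical Python helper for that first step: it rewrites a leading `/v1alpha/` or `/v1beta/` segment to `/v1/` and reports whether a preview version is past the May 1, 2023 shutdown date. The example path `/v1beta/engines/list` is illustrative and the helper names are assumptions. Request and response formats may also differ between versions, so check the v1 API reference rather than relying on a path rewrite alone.

```python
from datetime import date

# Preview versions that the text says are disabled on May 1, 2023.
PREVIEW_VERSIONS = {"v1alpha", "v1beta"}
PREVIEW_SHUTDOWN = date(2023, 5, 1)


def migrate_path(path: str) -> str:
    """Rewrite a leading /v1alpha/ or /v1beta/ segment to /v1/ (hypothetical helper)."""
    segments = path.split("/")
    # "/v1beta/engines/list" splits into ["", "v1beta", "engines", "list"].
    if len(segments) > 1 and segments[1] in PREVIEW_VERSIONS:
        segments[1] = "v1"
    return "/".join(segments)


def preview_disabled(version: str, today: date) -> bool:
    """True if `version` is a preview version and the shutdown date has passed."""
    return version in PREVIEW_VERSIONS and today >= PREVIEW_SHUTDOWN


if __name__ == "__main__":
    print(migrate_path("/v1beta/engines/list"))
    print(preview_disabled("v1beta", date.today()))
```

Paths that already use `/v1/` pass through unchanged, so the helper is safe to run over a whole list of endpoints.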

The gRPC API lets users generate images with a variety of parameters, some of which affect pricing. The only required parameter is prompt; when it is provided alone, the API returns a default-sized (512x512) image generated with default settings. The response contains a finish_reason that indicates the outcome of the request. If its value is filter, the safety filter was activated and the resulting image is blurred.
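To make the request and response rules above concrete, here is a minimal Python sketch that models them locally. It does not call the real gRPC service or use the official stability-sdk client: `GenerationRequest` and `usable_image` are hypothetical names, only `prompt` is mandatory, width and height default to the 512x512 size described above, and any image whose finish_reason is filter is discarded because it will be blurred.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class GenerationRequest:
    """Hypothetical local model of a request; not the stability-sdk class."""
    prompt: str        # the only required field, per the text above
    width: int = 512   # defaults mirror the documented 512x512 output
    height: int = 512

    def __post_init__(self) -> None:
        if not self.prompt.strip():
            raise ValueError("prompt is required")


def usable_image(finish_reason: str, image: bytes) -> Optional[bytes]:
    """Return the image unless the safety filter fired (the image would be blurred)."""
    if finish_reason.strip().lower() == "filter":
        return None
    return image


if __name__ == "__main__":
    request = GenerationRequest(prompt="a watercolor painting of a fox")
    print(request)
    print(usable_image("filter", b"blurred image bytes") is None)
```

Returning None for filtered results leaves it to the caller to decide whether to retry with a different prompt.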

The Photoshop plugin lets users generate and edit images using both SD and DALL·E 2 directly within Photoshop. To get the latest version of the Stability Photoshop Plugin, you can either install it from Adobe Exchange or download the CCX file directly; installing from Adobe Exchange is the easiest method.

With the Stability plugin for Photoshop, you can perform the following tasks:

Text-to-Image: Generate images from text using SD. To learn how to use this feature, follow the instructions on the "Learn how to use the Stability plugin for Photoshop to generate images from text, using SD" tutorial.

Image-to-Image: Generate images from your existing content using SD. To learn how to use this feature, follow the instructions on the "Learn how to use the Stability plugin for Photoshop to generate image from your content, using SD" tutorial.

Upscaler: Upscale your content with the Stability plugin for Photoshop. To learn how to use this feature, follow the instructions on the "Learn how to use the Stability plugin for Photoshop to upscale your content" tutorial.

The Blender addon lets you use SD directly within Blender. With this addon, you can generate textures, create AI videos from your renders, and more. The addon can be used in different contexts, such as the 3D View and the Image Editor.

To get started, you'll need to install the addon and enter your Stability API key. After completing the initial setup, you can use the addon for various tasks, including:

Render-to-Image: Generate images from your renders using the Stability addon for Blender. To learn how to use this feature, follow the instructions in the "Learn how to use the Stability addon for Blender to generate image from your renders" tutorial.

Generate Textures: Create images from your existing textures using the Stability addon for Blender. To learn how to use this feature, follow the instructions in the "Learn how to use the Stability addon for Blender to generate image from your existing textures" tutorial.

Animation: Generate animations from your videos using the Stability addon for Blender. To learn how to use this feature, follow the instructions in the "Learn how to use the Stability addon for Blender to generate animations from your videos" tutorial.

Upscaler: Upscale your rendered images and animations with the Stability addon for Blender. To learn how to use this feature, follow the instructions in the "Learn how to use the Stability addon for Blender to upscale your rendered images and animations" tutorial.

By following the tutorials and guidelines provided, you can enhance your 3D projects with the Stability addon for Blender.

Future Focus

Stability AI and its partners are committed to enhancing and expanding the capabilities of Stable Diffusion. Their goals include improving the platform's language comprehension and enabling it to generate images from audio and video input. The platform will also explore potential applications in diverse fields, such as biology research.

As more people use Stable Diffusion, the platform will keep improving and adding more advanced features and functions.

Collaborations and Partners

The success of Stable Diffusion can be attributed to the collaboration between Stability AI, Runway, and CompVis. Their combined expertise has allowed the platform to flourish, with each organization contributing unique skills and knowledge. As they continue to work together, the possibilities for Stable Diffusion's future development are virtually limitless.

What Is DreamStudio

DreamStudio is a simple tool that helps you create images using the latest version of the Stable Diffusion model. Stable Diffusion is a fast and efficient model that creates high-quality images from users' text input by learning the connections between words and images. With DreamStudio, you can create any image you can imagine in seconds: just type in your text prompt and click "dream."

Applications and Potential

SD can be used in many different industries and situations beyond simply creating images. Generating images from text descriptions can inspire innovation and creativity across a range of fields, including:

- Advertising

- Marketing

- Gaming

- Virtual reality

As the technology improves, more applications may appear, further demonstrating Stable Diffusion's value across many fields.

Education and Learning

Stable Diffusion can be a valuable tool for educators, offering a unique and engaging way to teach various subjects. Teachers can use the platform to create images from students' written descriptions, which can then help students improve their writing and visualization skills.

Students can also use the platform to explore their creative interests and experiment with different styles and visual techniques.

Art and Design

Artists and designers can use Stable Diffusion to generate images for various projects, from digital art to product design. Because the platform creates images from text descriptions, users can quickly try out different concepts and styles, which streamlines the creative process and saves time.

Stable Diffusion has models that can imitate different artistic styles. Artists can use these models to learn new techniques or to pay homage to their favorite creators.

Entertainment and Media

Stable Diffusion may change the entertainment industry by creating images for movies, TV shows, and games. By generating detailed and unique visuals from written descriptions while maintaining high quality, the platform can make image production faster and cheaper.

Some companies in the entertainment sector have already adopted Stable Diffusion, and the platform's influence on the industry is likely to grow.